#44 Classic episode - Paul Christiano on finding real solutions to the AI alignment problem

80,000 Hours Podcast

Rebroadcast: this episode was originally released in October 2018.

Paul Christiano is one of the smartest people I know. After our first session produced such great material, we decided to do a second recording, resulting in our longest interview so far. While it is challenging at times, I can strongly recommend listening: Paul works on AI himself and has an unusually well thought-through view of how it will change the world. This is now the top resource I'm going to refer people to if they're interested in positively shaping the development of AI and want to understand the problem better. Even though I'm familiar with Paul's writing, I felt I was learning a great deal and am now in a better position to make a difference to the world.

A few of the topics we cover are:

• Why Paul expects AI to transform the world gradually rather than explosively and what that would look like

• Several concrete methods OpenAI is trying to develop to ensure AI systems do what we want even if they become more competent than us

• Why AI systems will probably be granted legal and property rights

• How an advanced AI that doesn't share human goals could still have moral value

• Why machine learning might take over science research from humans before it can do most other tasks

• Which decade we should expect human labour to become obsolete, and how this should affect your savings plan

• Links to learn more, summary and full transcript.

• Rohin Shah's AI alignment newsletter.

Here's a situation we all regularly confront: you want to answer a difficult question, but aren't quite smart or informed enough to figure it out for yourself. The good news is you have access to experts who *are* smart enough to figure it out. The bad news is that they disagree.

If given plenty of time — and enough arguments, counterarguments and counter-counter-arguments between all the experts — should you eventually be able to figure out which is correct? What if one expert were deliberately trying to mislead you? And should the expert with the correct view just tell the whole truth, or will competition force them to throw in persuasive lies in order to have a chance of winning you over?

In other words: does 'debate', in principle, lead to truth?

According to Paul Christiano — researcher at the machine learning research lab OpenAI and legendary thinker in the effective altruism and rationality communities — this question is of more than mere philosophical interest. That's because 'debate' is a promising method of keeping artificial intelligence aligned with human goals, even if it becomes much more intelligent and sophisticated than we are.

It's a method OpenAI is actively trying to develop, because in the long term it wants to train AI systems to make decisions that are too complex for any human to grasp, but without the risks that arise from a complete loss of human oversight.

If AI-1 is free to choose any line of argument in order to attack the ideas of AI-2, and AI-2 always seems to successfully defend them, it suggests that every possible line of argument would have been unsuccessful.

But does that mean that the ideas of AI-2 were actually right? It would be nice if the optimal strategy in debate were to be completely honest, provide good arguments, and respond to counterarguments in a valid way. But we don't know that's the case.

The 80,000 Hours Podcast is produced by Keiran Harris.

Next Episodes

#33 Classic episode - Anders Sandberg on cryonics, solar flares, and the annual odds of nuclear war @ 80,000 Hours Podcast

📆 2020-01-08 07:27 / ⌛ 01:25:11

#17 Classic episode - Will MacAskill on moral uncertainty, utilitarianism & how to avoid being a moral monster @ 80,000 Hours Podcast

📆 2019-12-31 17:28 / ⌛ 01:52:39

#46 Classic episode - Hilary Greaves on moral cluelessness & tackling crucial questions in academia @ 80,000 Hours Podcast

📆 2019-12-23 23:24 / ⌛ 02:49:12

#67 – David Chalmers on the nature and ethics of consciousness @ 80,000 Hours Podcast

📆 2019-12-16 22:00 / ⌛ 04:41:50

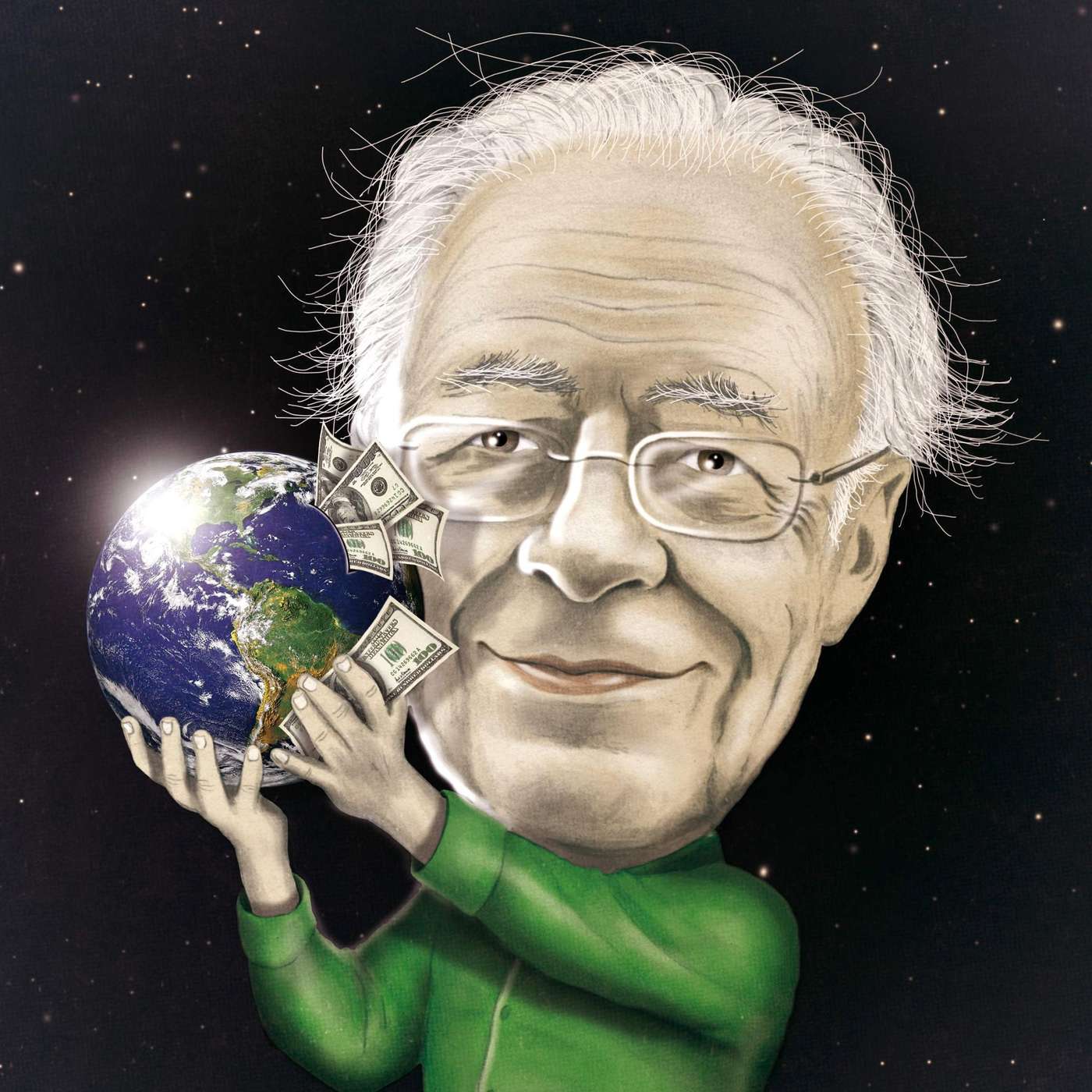

#66 – Peter Singer on being provocative, effective altruism, & how his moral views have changed @ 80,000 Hours Podcast

📆 2019-12-05 16:58 / ⌛ 02:01:21